Now that the data has been expanded and moved, use the standard options for reading CSV files, as in the following example: df = spark.read.format("csv").option("skipRows", 1).option("header", True).load("/tmp/LoanStats3a.
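To make the effect of skipping one row and then reading a header concrete, here is a minimal plain-Python sketch. It is not Spark code and not the reader's actual implementation; it only mimics the behaviour on a small string. The sample text and the function name `read_with_skipped_rows` are hypothetical.

```python
import csv
import io
from typing import Dict, List


def read_with_skipped_rows(text: str, skip_rows: int = 1) -> List[Dict[str, str]]:
    """Drop the first `skip_rows` lines, then parse the rest using the next line as the header."""
    # Assumption: the skipped rows are plain single-line text (no quoted multi-line fields).
    lines = text.splitlines()[skip_rows:]
    reader = csv.DictReader(io.StringIO("\n".join(lines)))
    return list(reader)


# Hypothetical sample: a comment in the first row, a header in the second.
sample = "Notes: hypothetical comment row\nid,loan_amnt\n1,5000\n2,2400\n"
rows = read_with_skipped_rows(sample)
print(rows[0]["loan_amnt"])
```

Without the skip, the comment row would be taken as the header and every column name would be wrong, which is why the two settings are used together.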

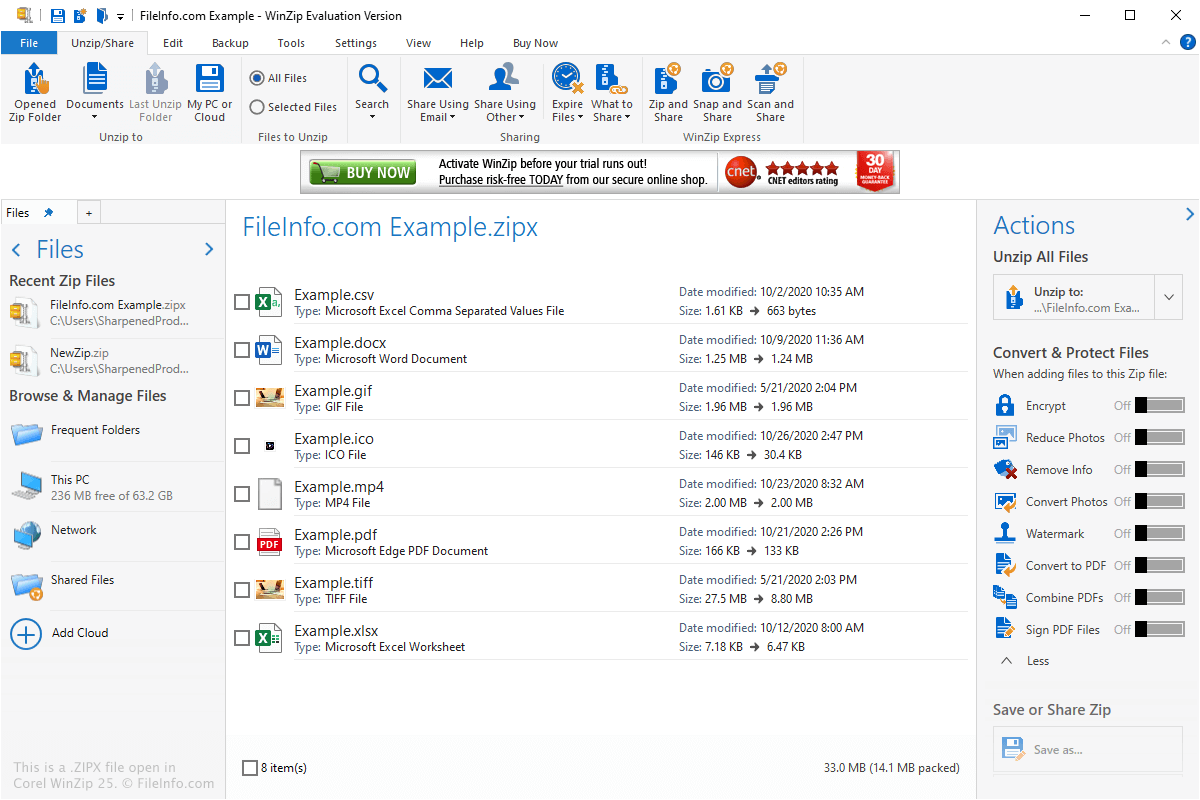

In this example, the downloaded data has a comment in the first row and a header in the second.

The example uses a zipped CSV file downloaded from the internet. The Azure Databricks %sh magic command enables execution of arbitrary Bash code, including the unzip command. The following code uses curl to download and then unzip to expand the data: %sh curl --output /tmp/ Use dbutils to move the expanded file back to cloud object storage to allow for parallel reading, as in the following: dbutils.fs.mv("file:/LoanStats3a.csv", "dbfs:/tmp/LoanStats3a.csv") You can also use the Databricks Utilities to move files to the driver volume before expanding them. See Download data from the internet and Databricks Utilities.

Apache Spark provides native codecs for interacting with compressed Parquet files. By default, Parquet files written by Azure Databricks end with .snappy.parquet, indicating they use snappy compression.

The ZIPX extension was introduced with WinZip 12.1. It is considered the next step in the evolution of the ZIP file format. The Unarchiver's open-source command-line tools are listed as supporting zipx, so they are one option.

You can use 7-Zip on any computer, including a computer in a commercial organization. Most of the code is under the GNU LGPL license. Some parts of the code are under the BSD 3-clause License. There is also an unRAR license restriction for some parts of the code.
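As an alternative to calling unzip from a shell cell, the expansion step can be sketched with Python's standard zipfile module. This swaps in a different technique from the one described above and is only an illustration: the archive is built locally as a stand-in for a download, and the names `expand_zip` and `sample.csv.zip` are hypothetical.

```python
import tempfile
import zipfile
from pathlib import Path
from typing import List


def expand_zip(archive_path: Path, target_dir: Path) -> List[Path]:
    """Extract every member of a zip archive and return the extracted paths."""
    with zipfile.ZipFile(archive_path) as archive:
        archive.extractall(target_dir)
        return [target_dir / name for name in archive.namelist()]


# Build a small stand-in archive so the sketch runs without a network download.
work_dir = Path(tempfile.mkdtemp())
archive_path = work_dir / "sample.csv.zip"
with zipfile.ZipFile(archive_path, "w", compression=zipfile.ZIP_DEFLATED) as archive:
    archive.writestr("sample.csv", "Notes: sample comment row\nid,amount\n1,5000\n")

extracted = expand_zip(archive_path, work_dir / "expanded")
print(extracted[0].name)
```

The extracted file lands on local disk, so on a cluster it would still need to be moved to shared storage before being read in parallel.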
